Chapter Eight Electromagnetic radiation and radioactivity


Introduction

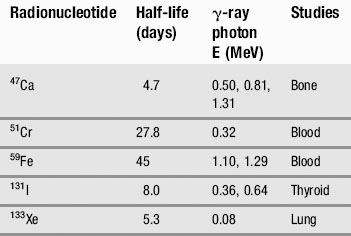

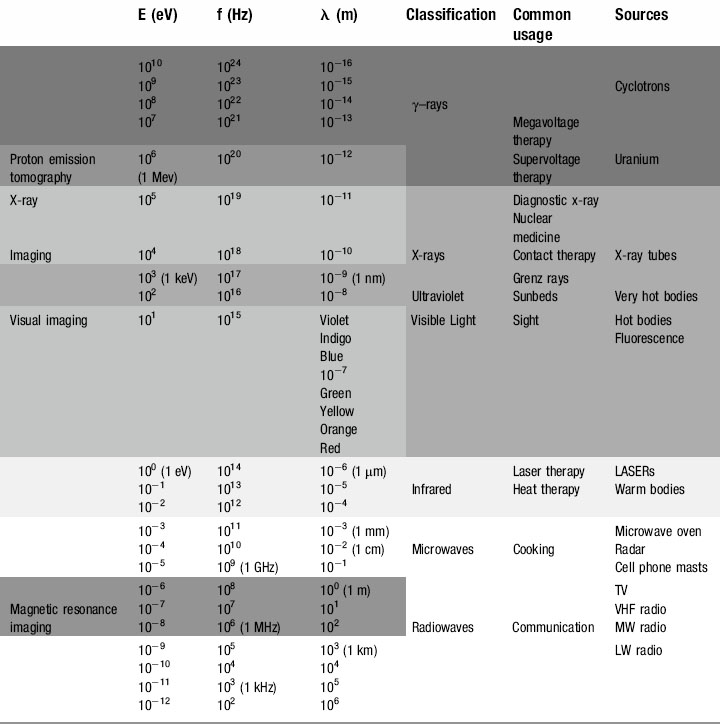

We have already touched briefly on the subject of electromagnetic radiation in the previous two chapters; now it is time to examine this phenomenon in detail. As you will see from Table 8.1, as clinicians we encounter almost the full range of the electromagnetic spectrum in both everyday and medical life. From tuning our car radio to a long-wave station on the way into work to treating patients with gamma rays during radiotherapy, we use electromagnetic radiation to see, to diagnose and to treat.

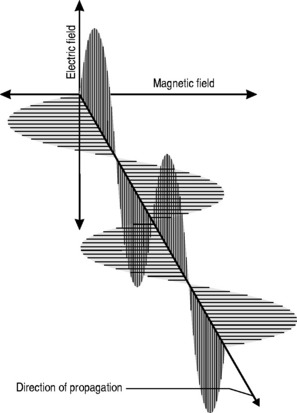

All electromagnetic radiation is made up of particles called photons, which have neither mass nor charge and which, in a vacuum, move at a constant speed of 2.998 × 10⁸ m s⁻¹. By now, you will hopefully have mastered the ‘double-think’ of wave-particle duality and the unsettling concept of probability waves, and will have no difficulty in accepting that electromagnetic radiation also consists of waves. Unlike the waves we encountered in Chapter 6, which required a medium for their propagation, these waves can travel readily through a vacuum. The waveform consists of oscillating electric and magnetic fields perpendicular to each other: the changing magnetic field produces an electric field and the changing electric field produces a magnetic field, making the system self-sustaining. The direction of propagation is at right angles to both fields (Fig. 8.1).

The wavelength (λ) and frequency (f) of a wave are inversely proportional; as can be seen from Figure 8.1, if you double the wavelength, you halve the frequency, and vice versa. High frequency means short wavelength; low frequency means long wavelength.

The equation that defines this relationship mathematically is called the wave equation:

$$v = f\lambda$$

where λ and f have already been defined and v is the speed of the wave.

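Because all electromagnetic radiation travels through a vacuum at the same speed, the speed of light c, the wave equation for electromagnetic radiation can be written:

$$c = f\lambda$$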
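Because c is the same for every part of the spectrum, rearranging c = fλ gives f = c/λ, so frequency and wavelength are inversely proportional. The short Python sketch below illustrates this; the function names are illustrative choices rather than anything standard, and c is taken as its exact SI value of 299 792 458 m s⁻¹.

```python
# Speed of light in a vacuum, m/s (exact by SI definition)
C = 299_792_458.0


def frequency_from_wavelength(wavelength_m: float) -> float:
    """Return the frequency in hertz for a wavelength in metres (f = c / lambda)."""
    return C / wavelength_m


def wavelength_from_frequency(frequency_hz: float) -> float:
    """Return the wavelength in metres for a frequency in hertz (lambda = c / f)."""
    return C / frequency_hz


if __name__ == "__main__":
    f1 = frequency_from_wavelength(1.0)  # 1 m wavelength
    f2 = frequency_from_wavelength(2.0)  # doubled wavelength
    # Doubling the wavelength halves the frequency, so the ratio is 2
    print(f"1 m -> {f1:.4g} Hz, 2 m -> {f2:.4g} Hz, ratio {f1 / f2:.1f}")
```

Converting in either direction uses the same constant, so the two helpers are simply inverses of each other.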

The electromagnetic spectrum

Radio waves

At the bottom end of the energy range of electromagnetic radiation are the radio waves used for everyday media and communication. It is just as well that these photons carry such minuscule amounts of energy: our bodies are penetrated by them every millisecond of every day, but with typical energies of 10⁻¹² to 10⁻⁶ eV they have insufficient energy to damage the atoms of which we are composed (remember, even at the top end of their energy range, 10⁻⁶ eV is equal to only 1.6 × 10⁻²⁵ J!).
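The energy figures quoted above can be checked with a few lines of Python. This is a minimal sketch assuming the Planck relation E = hf and the exact SI values of the Planck constant and the electronvolt; the 200 kHz long-wave frequency is a hypothetical example, not a specific station.

```python
# Physical constants (exact SI values)
PLANCK_H = 6.62607015e-34        # Planck constant, J s
ELECTRON_VOLT = 1.602176634e-19  # 1 eV expressed in joules


def ev_to_joules(energy_ev: float) -> float:
    """Convert an energy in electronvolts to joules."""
    return energy_ev * ELECTRON_VOLT


def photon_energy_ev(frequency_hz: float) -> float:
    """Photon energy from the Planck relation E = h * f, returned in electronvolts."""
    return PLANCK_H * frequency_hz / ELECTRON_VOLT


if __name__ == "__main__":
    # Upper end of the radio energy range quoted in the text
    print(f"1e-6 eV = {ev_to_joules(1e-6):.2e} J")
    # Hypothetical 200 kHz long-wave transmitter (illustrative value)
    print(f"200 kHz photon: {photon_energy_ev(200e3):.2e} eV")
```

A 200 kHz photon works out at roughly 8 × 10⁻¹⁰ eV, comfortably inside the range quoted for radio waves.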

Radio (and television) signals are generated by high-frequency alternating currents flowing through the aerial of a radio transmitter. If a receiving aerial, which can be a simple piece of wire, is placed in the path of the radiation, an electromotive force is induced in it, causing a current to flow. The aerial acts as the input transducer discussed in Chapter 7: the small current it produces is amplified by the radio circuitry, and the output transducer (the loudspeaker) converts the signal into sound waves.

Microwaves

Although some sources treat microwaves as part of the radio wave spectrum, their uses are specific and distinct. With wavelengths measured in centimetres and millimetres, microwaves were originally a neglected part of the electromagnetic spectrum. This changed during the Second World War, when British physicists and engineers secretly developed RADAR (RA(dio) D(etection) A(nd) R(anging)), enabling them to detect and intercept the German bombers that had been devastating the country’s military, industrial and social infrastructure.
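Applying c = fλ to wavelengths of centimetres and millimetres shows which frequencies are involved. The sketch below is illustrative only: the three wavelengths are example values chosen to span the range, and the function name is hypothetical.

```python
C = 299_792_458.0  # speed of light in a vacuum, m/s


def frequency_ghz(wavelength_m: float) -> float:
    """Frequency in gigahertz for a wavelength in metres (f = c / lambda)."""
    return C / wavelength_m / 1e9


if __name__ == "__main__":
    # Illustrative wavelengths spanning centimetres to millimetres
    for label, wavelength in [("10 cm", 0.10), ("1 cm", 0.01), ("1 mm", 0.001)]:
        print(f"{label}: {frequency_ghz(wavelength):.1f} GHz")
```

It prints roughly 3 GHz for 10 cm, 30 GHz for 1 cm and 300 GHz for 1 mm, so each tenfold shortening of the wavelength raises the frequency tenfold.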

Infrared radiation

Infrared ‘heat rays’ are produced by moderately hot objects, whose atoms emit them in much the same way that visible light is produced, albeit with lower energies and longer wavelengths. Classic examples are the conventional ovens discussed above and fires, be they coal, wood or electric; they all radiate infrared, which can be sensed by heat-detecting nerve endings in our skin, giving a sensation of warmth. Although mammals cannot see infrared, some fish, snakes and scorpions can sense the infrared portion of the spectrum (snakes such as pit vipers, pythons and boas do so with specialised pit organs rather than their eyes). This means that they can find their prey by the heat its body radiates, which is particularly useful for hunting in the dark.
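The statement that infrared photons carry less energy than visible ones follows from E = hf combined with c = fλ, giving E = hc/λ. The Python sketch below compares a 500 nm (green) photon with a 10 μm photon in the thermal infrared; both wavelengths are illustrative assumptions rather than values from the text, and the function name is hypothetical.

```python
PLANCK_H = 6.62607015e-34        # Planck constant, J s
C = 299_792_458.0                # speed of light in a vacuum, m/s
ELECTRON_VOLT = 1.602176634e-19  # joules per electronvolt


def photon_energy_ev_from_wavelength(wavelength_m: float) -> float:
    """Photon energy E = h * c / lambda, returned in electronvolts."""
    return PLANCK_H * C / wavelength_m / ELECTRON_VOLT


if __name__ == "__main__":
    visible = photon_energy_ev_from_wavelength(500e-9)  # green light, 500 nm
    infrared = photon_energy_ev_from_wavelength(10e-6)  # thermal infrared, 10 micrometres
    print(f"500 nm: {visible:.2f} eV, 10 um: {infrared:.3f} eV")
```

The visible photon comes out at about 2.48 eV and the infrared photon at about 0.124 eV, some twenty times less, because the infrared wavelength is twenty times longer.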

For the clinician, infrared therapy is a familiar, if largely unproven, technology that is commonly used to treat chronic non-healing wounds and musculoskeletal pain, as well as a diverse range of other conditions from haemorrhoids to leukaemia. The technology behind it and the evidence base for infrared therapies are discussed in more depth in Chapter 10.

Ultraviolet

Seasonal affective disorder (SAD) can also be combated by ultraviolet radiation. Everyone shows some physiological response to the diminished light levels of the winter season, and the effect is magnified in populations living nearer the Arctic and Antarctic circles. The lack of light entering the eye increases secretion of the hormone melatonin by the pineal gland and is associated with reduced levels of the neurotransmitter serotonin. Although this response is a useful evolutionary device, promoting torpor, reduced metabolism or even hibernation in the winter months when food is scarce and energy needs to be conserved, it can in some individuals trigger clinical depression. Wintering in tropical or sub-tropical climes, or at ski resorts (snow reflects ultraviolet better than longer wavelengths, which is why skiers get tanned, or sunburned, so easily), used to be the treatment of choice for the ‘December blues’. However, the advent of ultraviolet lamps and, more recently, ‘daylight’ electric bulbs has helped reduce dependence on antidepressant medication among the less affluent sections of society.

X-rays

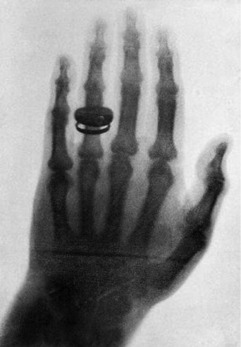

Within a month of his discovery, Röntgen had determined most of the rays’ properties and taken the first-ever clinical radiograph, an image of his wife’s left hand clearly showing the phalanges and her wedding ring (Fig. 8.2).

X-RAY DIFFRACTION

Röntgen was unable to make x-rays demonstrate diffraction and therefore erroneously concluded that they were not the same class of phenomenon as light. In fact, x-rays do diffract but, because their wavelength is so short, no ordinary diffraction grating will cause them to do so. Instead, the regularly spaced atoms in structures such as metallic lattices and crystals act as a grating and cause x-ray diffraction to occur, and x-ray diffraction later became a vital research tool in understanding the properties of matter. Although x-ray crystallography has been used to determine the atomic and molecular structures of thousands of materials, perhaps its most famous use was by Rosalind Franklin, who applied the technique to DNA. Unfortunately for her, Crick and Watson beat her to the interpretation of her findings. In 1901, Röntgen was awarded the first-ever Nobel Prize in Physics for his discovery; in 1962, Crick and Watson, together with their colleague Maurice Wilkins, won the Nobel Prize in Physiology or Medicine. Rosalind Franklin never received the honour: Nobel Prizes cannot be awarded posthumously, and she had died in 1958 at the tragically young age of 37, from cancer most probably brought on by exposure to the x-rays with which she worked.
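Why an ordinary grating fails can be seen from the grating equation d sin θ = nλ, where d is the spacing of the grating. The Python sketch below treats a row of atoms as a simple grating, which is a simplification (crystallographers use the closely related Bragg condition, 2d sin θ = nλ); the 0.1 nm x-ray wavelength, the 600 lines per mm ruled grating and the 0.3 nm atomic spacing are all assumed, illustrative values, and the function name is hypothetical.

```python
import math


def first_order_angle_deg(wavelength_m: float, spacing_m: float) -> float:
    """First-order angle from the grating equation d sin(theta) = n lambda with n = 1."""
    ratio = wavelength_m / spacing_m
    if ratio > 1:
        raise ValueError("no first-order maximum: wavelength exceeds spacing")
    return math.degrees(math.asin(ratio))


if __name__ == "__main__":
    xray = 0.1e-9               # illustrative x-ray wavelength, 0.1 nm
    ruled_grating = 1e-3 / 600  # hypothetical optical grating, 600 lines per mm
    crystal = 0.3e-9            # illustrative spacing between atoms, 0.3 nm
    print(f"ruled grating: {first_order_angle_deg(xray, ruled_grating):.4f} degrees")
    print(f"crystal lattice: {first_order_angle_deg(xray, crystal):.1f} degrees")
```

With these numbers the ruled grating deflects the x-rays by only about 0.003°, far too little to observe, whereas the atomic spacing gives a first-order angle of roughly 19.5°.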

X-rays are dealt with in more detail in a separate section later in the chapter.
